Your attribution measurement is not broken, it is a feature - #QN21

We need higher standards for marketing measurement than looking at where the user was clicking before arriving at a website or app.

Still today, many marketers, finance executives, and leadership teams in companies of all sizes, especially those with a large digital ecosystem, gather together and seem to agree on the following: “We are not comfortable with our online marketing, numbers do not match in the different tools or with our internal data. Measurement is broken and we need to fix it.”

Dig deeper, and it turns out that the solution that has driven their digital marketing decisions for the last 15 years is the very one they are now trying to fix. They believe there is technical work to do: stitch more IDs together, build better models, capture more touchpoints, or ignore consent. Then, they think, they will finally be able to scale digital marketing confidently.

But here’s the uncomfortable truth: your attribution measurement is not broken. It’s working exactly as designed. The problem is that it was never designed to tell you whether your marketing is actually working.

The precision trap

The promise of digital marketing was measurement precision. Every click tracked. Every conversion attributed. Every euro accounted for.

This promise created a generation of marketers who believe that if they could just see the full user journey, every touchpoint, every interaction, every click before conversion, they would finally understand what’s working. It even worked really well for growth marketers in some cases.

The result? Companies have spent years building increasingly sophisticated attribution systems:

Multi-touch attribution models that assign fractional credit across touchpoints

Data science teams dedicated to stitching IDs across devices and browsers

Elaborate systems to capture “view-through” conversions, with a high dose of illusion

Machine learning models trained on post-click behavior

All of this effort to answer one question: “Which of my marketing activities caused this conversion?”

The problem is that this question has a fundamental flaw. Attribution models don’t measure causation. They measure coincidence.

When activity in digital marketing channels is limited, this coincidence can correlate reasonably well with causation. Growing companies, however, get stuck in these measurement systems and stay in the trap for good. Do you see yourself here? Read on.

The coincidence problem

When a user clicks on an ad and later converts, attribution systems record this as evidence that the ad “worked.” But did it?

Consider the following scenarios:

A user searches for your brand name, clicks on your paid search ad, and converts. Would they have converted anyway through organic search?

A user sees your retargeting ad after abandoning a cart, clicks it, and completes the purchase. Were they already coming back to buy?

A user clicks on a social media ad and converts three days later. Did the ad influence them, or was the decision already made?

In each case, the attribution system faithfully records the conversion and assigns credit. But none of this tells you whether the advertising caused the conversion or merely captured someone who was already going to convert.

Attribution doesn’t measure the impact of advertising. It measures the efficiency of capturing existing demand. How long will you keep making budgeting decisions based on a flawed attribution model?
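To make the gap concrete, here is a minimal Python sketch with an entirely hypothetical population of 100 ad clickers. It assumes we could know what each user would have done without the ad, which is exactly what real attribution data never contains. The `User` fields and the 80/10/10 split are illustrative assumptions, not figures from any real campaign.

```python
from dataclasses import dataclass


@dataclass
class User:
    clicked_ad: bool
    converts_with_ad: bool          # what the attribution system observes
    would_convert_without_ad: bool  # the counterfactual, unobservable in practice


def attributed_conversions(users):
    """Click-based attribution: every converter who clicked gets credited to the ad."""
    return sum(1 for u in users if u.clicked_ad and u.converts_with_ad)


def incremental_conversions(users):
    """Conversions that happened only because of the ad."""
    return sum(
        1
        for u in users
        if u.clicked_ad and u.converts_with_ad and not u.would_convert_without_ad
    )


# Hypothetical population (assumed numbers for illustration only):
users = (
    [User(True, True, True)] * 80      # were going to buy anyway (brand search, retargeting)
    + [User(True, True, False)] * 10   # genuinely persuaded by the ad
    + [User(True, False, False)] * 10  # clicked but never bought
)

print("attributed:", attributed_conversions(users))    # attributed: 90
print("incremental:", incremental_conversions(users))  # incremental: 10
```

In this toy setup the attribution report credits the ad with 90 conversions while only 10 were caused by it. The report is not wrong about what it counts; it simply counts something other than impact.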

The Universal Analytics legacy

Much of today’s attribution obsession traces back to a single product: Google Analytics, especially its Universal Analytics version.

For over a decade, Google Analytics defined how companies measured digital marketing. It tracked users across sessions, assigned conversions to channels, and produced reports that became the lingua franca of digital marketing. Marketing teams built their entire measurement infrastructure around it.

When Google sunset Universal Analytics in 2023 in favor of Google Analytics 4, something interesting happened. Instead of asking whether the measurement paradigm itself needed to shift and adopt new mechanisms, including the modeling capabilities of Google Analytics 4, many companies just wanted continuity. They replicated their legacy systems on top of Google Analytics 4, built their own tracking systems, or tried to emulate Universal Analytics with Piwik, Amplitude or Mixpanel, ignoring the changes in the ecosystem.

They invested heavily in:

Customer Data Platforms (CDPs) to stitch identities across touchpoints

Unclear first-party data strategies to replace third-party cookies

Server-side tracking to capture what browsers no longer allowed

Maintaining the Universal Analytics data schema, or adapting Google Analytics 4 to legacy tracking standards

Complex ID graphs to maintain the illusion of user-level tracking

The goal? Recreate the Universal Analytics world with new tools. To make things worse, those using the BigQuery export (available only in the paid version of Universal Analytics, now also in the free version of Google Analytics 4) tried to replicate the same reports with Google Analytics 4 data, overlooking that this is no longer the best dataset for assessing marketing performance. Many are still banging their heads against the wall, nostalgic for a “better past” and blaming Google Analytics for their problems.

But this misses the fundamental problem. The issue wasn’t that Universal Analytics stopped working. It’s that the approach Google Analytics popularized will never again answer the questions that matter for growth.

By trying to preserve an outdated measurement paradigm, companies have created increasingly complex systems that:

Consume enormous resources in data engineering and infrastructure

Focus measurement on demand capture rather than demand creation

Create a false sense of precision that doesn’t reflect marketing’s true impact

Prevent marketing from becoming a growth engine by optimizing for the wrong metrics

The companies that will thrive aren’t the ones that successfully replicated Universal Analytics metrics. They’re the ones that recognized the paradigm shift and built measurement systems designed for growth, not for tracking.

Why “fixing” attribution makes things worse

The natural response to attribution’s limitations is to try to fill its gaps: include post-view paths to conversion, add CRM IDs to increase cross-device coverage, build better models, capture more data. In some cases, companies even ignore consent requirements in pursuit of the supposedly higher goal of having an ID for every user, so that reports can keep spitting out data.

But this misses the point entirely.

More sophisticated attribution doesn’t solve the coincidence problem, it obscures it. When your data science team builds an elaborate multi-touch model that assigns 23.7% credit to a display impression and 34.2% to a paid search click, it creates an illusion of precision that doesn’t exist.

Worse, it diverts resources from what actually matters: understanding the true incremental impact of your marketing.

As Les Binet famously said: “You don’t need a tool to measure your marketing impact. You need a science.”

The difference is crucial:

A tool processes data and produces reports

A science establishes causal relationships through rigorous methodology

Attribution is a tool. What you need is marketing science, which existed before digital attribution. And it worked.

Before we could track clicks, marketers used:

Sales data: Did sales increase when we advertised?

Market research: Did awareness and consideration improve?

Econometric modeling: What’s the statistical relationship between media spend and business outcomes?

Experiments: What happens when we turn advertising on or off in different regions?

These methods didn’t require tracking individual users. They didn’t need to stitch IDs across devices. They measured what actually mattered: did the advertising grow the business?

The digital measurement revolution didn’t improve on these methods—it distracted from them. We became so obsessed with tracking individual user journeys that we forgot to ask whether the aggregate investment was working.

The CFO problem

For the past 15 years, marketing teams have been reporting attribution numbers to CFOs, boards, investors, and private equity firms. These reports looked sophisticated: detailed breakdowns by channel, precise ROI calculations, beautiful dashboards.

But they are built on a flawed foundation.

Now, as the limitations of attribution become more apparent via cookie limitations, privacy regulations, platform walled gardens, consent limitations, fragmentation of media and many other reasons, CFOs are asking uncomfortable questions:

“If our attribution was accurate, why do our MMMs show different results?”

“If paid search is so efficient, why doesn’t scaling it drive proportional growth?”

“If our digital ROAS is 5x, why isn’t our business growing faster?”

The reality is that CFOs have been fed a version of marketing performance that systematically:

Overvalues lower-funnel activities and remarketing that capture existing demand

Undervalues upper-funnel activities that create future demand

Ignores the offline impact of digital advertising

Misses the counterfactual: what would have happened without the advertising

This isn’t a measurement error. It’s a fundamental misunderstanding of what attribution measures and how marketing decisions should be made.

What you should do instead

If attribution isn’t the answer, what is? Here’s a framework for marketing measurement that actually works:

1. Start with incrementality, not attribution

The fundamental question isn’t “which touchpoints contributed to this conversion?” It’s “how many conversions happened because of my marketing that wouldn’t have happened otherwise?”

This requires experiments:

Geo-lift tests: Run campaigns in some regions but not others, measure the difference

Holdout tests: Exclude a portion of your audience from advertising, compare outcomes

Platform incrementality studies: Use tools like Meta’s or Google’s Conversion Lift and Brand Lift studies
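The arithmetic behind a holdout test can be sketched in a few lines of Python. This is a minimal illustration, not a full experiment design: the function name `holdout_lift`, all input numbers, and the simplification that every conversion in the exposed group would be attributed to the ad are assumptions made for the example. The z-score is a standard pooled two-proportion test.

```python
import math


def holdout_lift(treated_users, treated_conversions,
                 control_users, control_conversions,
                 spend, value_per_conversion):
    """Compare an exposed group against a holdout group that saw no ads."""
    treated_rate = treated_conversions / treated_users
    control_rate = control_conversions / control_users

    # Conversions the exposed group would have produced anyway,
    # estimated from the holdout group's conversion rate.
    baseline = control_rate * treated_users
    incremental = treated_conversions - baseline

    # Pooled two-proportion z-test for the difference in conversion rates.
    pooled = (treated_conversions + control_conversions) / (treated_users + control_users)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / treated_users + 1 / control_users))

    return {
        "incremental_conversions": incremental,
        "relative_lift": incremental / baseline,
        # Assumption: the attribution report credits all exposed-group conversions to the ad.
        "attributed_roas": treated_conversions * value_per_conversion / spend,
        "incremental_roas": incremental * value_per_conversion / spend,
        "z_score": (treated_rate - control_rate) / std_err,
    }


# Hypothetical campaign: 100,000 exposed users, 50,000 held out.
result = holdout_lift(
    treated_users=100_000, treated_conversions=2_200,
    control_users=50_000, control_conversions=1_000,
    spend=20_000, value_per_conversion=50,
)
for name, value in result.items():
    print(f"{name}: {value:.3f}")
```

With these assumed inputs the attributed ROAS is 5.5 while the incremental ROAS is 0.5, because roughly 2,000 of the 2,200 conversions would have happened without the campaign. That is one way a "5x ROAS" channel can coexist with a business that is not growing.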

2. Use Marketing Mix Modeling (MMM) for strategic decisions

MMM doesn’t try to track individual users. It uses aggregate data—media spend, sales, external factors—to estimate the contribution of each marketing channel.

Modern MMM tools like Google’s Meridian make this accessible to more companies than ever.
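As a rough illustration of the idea (and explicitly not how Meridian works, which is a far richer Bayesian model), the sketch below fits a toy MMM in plain Python: weekly sales regressed on adstocked TV spend and search spend. The data is synthetic and noise-free, the carry-over rate of 0.5 is assumed to be known, and every name here (`adstock`, `fit_ols`, the two channels) is hypothetical.

```python
def adstock(spend, decay):
    """Geometric adstock: each week carries over a share of the previous week's effect."""
    carried, out = 0.0, []
    for x in spend:
        carried = x + decay * carried
        out.append(carried)
    return out


def solve(a, b):
    """Solve a small linear system a * x = b by Gauss-Jordan elimination with pivoting."""
    n = len(b)
    m = [row[:] + [b[i]] for i, row in enumerate(a)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(n):
            if r != col:
                factor = m[r][col] / m[col][col]
                m[r] = [v - factor * p for v, p in zip(m[r], m[col])]
    return [m[i][n] / m[i][i] for i in range(n)]


def fit_ols(features, target):
    """Least squares with an intercept, via the normal equations (X'X) beta = X'y."""
    rows = [[1.0] + list(r) for r in zip(*features)]
    k = len(rows[0])
    xtx = [[sum(r[i] * r[j] for r in rows) for j in range(k)] for i in range(k)]
    xty = [sum(r[i] * y for r, y in zip(rows, target)) for i in range(k)]
    return solve(xtx, xty)


# Synthetic weekly data (assumed): aggregate spend only, no user-level tracking.
tv = [0, 100, 0, 0, 80, 0, 120, 0, 0, 60, 0, 0]
search = [20, 25, 30, 22, 28, 35, 24, 26, 31, 27, 23, 29]
tv_adstocked = adstock(tv, decay=0.5)

# Sales generated from known "true" effects so the fit can be checked.
sales = [500 + 2.0 * t + 3.0 * s for t, s in zip(tv_adstocked, search)]

coefs = fit_ols([tv_adstocked, search], sales)
base, tv_effect, search_effect = coefs
print(f"base sales per week: {base:.2f}")
print(f"sales per unit of adstocked TV: {tv_effect:.2f}")
print(f"sales per unit of search spend: {search_effect:.2f}")
```

Because the data is generated without noise, the regression recovers the effects it was built from. Real MMM has to deal with noise, seasonality, saturation, correlated channels and unknown decay rates, which is why dedicated tools and experiment-based calibration matter.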

3. Accept that some things can’t be precisely measured

Not everything that matters can be measured precisely, and not everything that can be measured precisely matters.

Brand building is notoriously difficult to attribute but demonstrably effective. The inability to track it doesn’t mean it doesn’t work—it means your measurement system isn’t capturing what matters.

4. Educate your CFO (and yourself)

The CFO-CMO gap isn’t just about language—it’s about fundamental misunderstandings of what marketing measurement can and cannot tell us.

Have honest conversations about:

The difference between attributed and incremental value

Why efficient-looking channels might not be driving growth

The role of brand building in long-term business value

As Google’s research on CMO-CFO alignment notes: “Effective collaboration between CMOs and CFOs relies on a mutual understanding of each other’s work.”

This is not only about education. It is also about trying to look into the future without the lens of the past, and letting go of company traditions without blaming each other. Admit that the world has become increasingly complex and that measurement standards need to adapt. Nobody is wrong; let’s get better together!

The feature, not the bug

The way cross-channel attribution has traditionally worked isn't broken. It's doing exactly what it was designed to do: track post-click user behavior and assign credit to touchpoints.

The problem is that we’ve been using this tool as if it measured marketing effectiveness, when it actually measures something else entirely: the efficiency of capturing people who were already close to converting.

This doesn’t mean tracking and attribution are useless. They serve a real purpose: continuous optimization of digital marketing tactics and ongoing performance monitoring. Attribution helps you understand which creatives perform better, which audiences engage more, and where your campaigns might be leaking efficiency. It is even more valuable when post-view activity is tracked, which is mostly possible within the “walled gardens”, and used to drive campaign optimization. Know its limitations in cross-channel measurement, adjust conversion values to align more closely with your business, and you will have a proper data feed to fuel your AI-driven campaigns in advertising platforms. However, in-platform attribution will never tell you the truth about the cross-channel impact of your marketing.

Attribution cannot inform budget allocation, measure incrementality, or establish causality. For those decisions, attribution data is directional at best—a signal, not an answer.

Understanding this distinction is liberating. It frees you from the endless pursuit of “fixing” attribution and allows you to focus on what actually matters: understanding whether your marketing is growing your business.

The companies that will win in the next decade aren’t the ones with the most sophisticated measurement implementations. They’re the ones that understand the limitations of each methodology and have built measurement frameworks and systems that capture what truly matters.

Your attribution measurement isn’t broken. But your expectations of what it can tell you might be.

Industry Updates

McKinsey: CMOs and CFOs must unite on marketing ROI

A recent McKinsey report warns that companies may be spending millions on marketing campaigns without understanding their direct business impact. The report calls for CMOs and CFOs to collaborate more closely, using AI-powered analytics to bridge the measurement gap. Without clear ROI frameworks, marketing technology risks becoming “a digital money pit.”

Meta on calibrating MMM with incrementality

Meta has published guidance on how to calibrate Marketing Mix Models using incrementality experiments. MMM provides the strategic view, while incrementality tests provide causal validation.

Video: How to make marketing analytics a true business partner

Dominic Williamson’s conversation with Recast’s Michael Kaminsky on stopping measurement that kills growth is a great watch. His core argument: measurement systems optimized for precision often sacrifice growth for accuracy, which goes against what marketing measurement is all about.

AI shopping agents break the attribution loop

As AI shopping assistants become more prevalent, traditional attribution is facing an existential challenge. When an AI agent recommends and purchases a product on behalf of a consumer, there’s no “last click” to attribute. Marketers must now ensure their brands are known to AI systems, a fundamentally different challenge than optimizing for clicks.

BCG’s four-legged approach to marketing ROI

BCG has published a framework advocating for multiple measurement approaches: Marketing Mix Modeling, incrementality testing, attribution (used appropriately), and brand tracking. The key insight? No single methodology captures the full picture. Companies that triangulate across methods make better decisions.

System1 and Effie: The Creative Dividend

System1 and Effie have published The Creative Dividend, a new book analyzing 1,265 campaigns representing $139 billion in market share, combined with creative testing data from 200,000+ consumers.

The headline finding: creativity and media together account for 60.1% of campaign business results on average—and up to 98.3% in some categories. Neither works in isolation.

The book introduces Excess Share of Creativity (ESOC): a metric capturing how much creative advantage actually enters the market once media support is considered. The key insight? Profit doesn't rise linearly—it accelerates. As ESOC increases, the likelihood of reporting profit growth increases exponentially.

The implication is counterintuitive but powerful: "good enough" creative is often the most expensive choice. Mediocre creative requires more media spend to achieve the same results. Great creative multiplies the return on every media dollar.

For marketers still fighting budget battles, this provides the evidence: cutting creative quality to save money often destroys more value than it saves. The barrier isn't belief—41% of marketers say creativity is seen as a risk. The barrier is confidence in proving it works.

Download the book

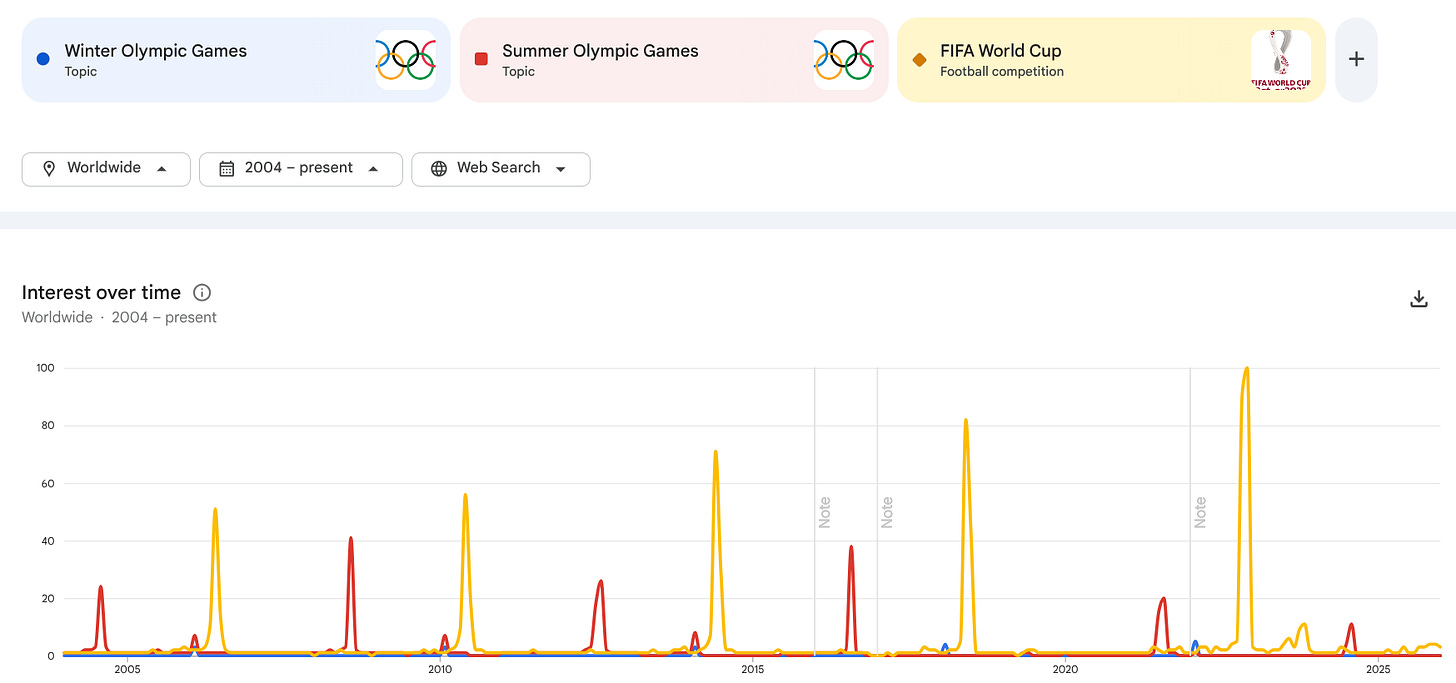

Chart of the week

The Winter Olympics took place a few weeks ago in Italy. It’s hard to assess popularity unless we compare it to other topics. Below are the Google Trends searches for three different sports events that each take place every four years. Indeed, football is a massive sport!

Oldies but Goldies

John Wanamaker’s immortal quote (and its modern interpretation)

Half the money I spend on advertising is wasted; the trouble is I don’t know which half.

This quote, attributed to department store magnate John Wanamaker in the late 1800s, has been used for over a century to justify better measurement. The irony? Attribution was supposed to solve Wanamaker’s problem. It didn’t.

Attribution tells you which clicks preceded conversions. It doesn’t tell you which advertising was wasted. In fact, it often leads to the opposite conclusion—that the advertising which attributes best (lower-funnel, demand capture) is the most valuable, when in reality the advertising that attributes poorly (upper-funnel, demand creation) may be doing the heavy lifting.

Wanamaker’s quote remains relevant not because we lack measurement tools, but because the fundamental challenge of understanding advertising’s true impact requires more than tracking clicks. It requires controlled experiments, statistical modeling, and a willingness to accept uncertainty.

A century and a half later, the marketers who embrace this uncertainty—who build measurement systems designed to learn rather than to prove—are the ones who will finally know which half is working.

Let us know: what are you thinking?